Thinking Like a SysAdmin

Taking RTFM to heart · June 22, 2021

As I regain some semblance of myself, and my free time, I'm going to try and ramp back up the blog posts. I honestly missed writing them a lot. It's a great outlet, and I know it's a really good way to share some of the technical things I can't really share with anyone else. So despite posting something literally this week, we're back at it again!

I absolutely destroyed my LAN

Yeup, that sums it up. We can probably end the post here. Quite frankly I screwed my network up, and then suffered for months chasing gremlins out of it. It messed up video conferences, LAN routing, wireless connectivity, and drove me up the wall because I couldn't for the life of me figure out why. Every time I would "fix it" other strange issues would pop up, or I'd get stuck cursing at my computers for not working.

I'm sorry computers, the problem was actually between the chair and the desk.

Symptoms

So the issue at heart here was primarily LAN routing. For some reason some computers on my LAN could talk to each other and some couldn't, and which clients were affected would change every time I looked into it. Sometimes I could ssh into anything from my droid, but most of the time I was completely and utterly locked out. Other times I couldn't hit anything from any computer. Only the router worked reliably for access, and using it as a ProxyJump is just annoying when there shouldn't be isolation inside the LAN. So what do we even do about any of that?

Obviously we cart off some of the systems to a different network for testing. I fortunately have a couple of MikroTik mAP lites, so I spin one up as a simple AP, and lo and behold the droid can talk to my netbook, but an Ubuntu system refuses to communicate. Weird. Rebuild the AP, same thing. Cart the systems back off to the actual LAN, steady disconnect between Ubuntu and Alpine systems. Obviously my problem is with route handling on the Alpine system, right? This makes the most sense because the network stack was replaced recently in Alpine 3.13 with ifupdown-ng, and all of my /etc/network/interfaces configurations are using the old style configuration.

Except they aren't. My netbook is using NetworkManager, the droid is some hacky shell script that's needed to get wlan0 to even exist, and the Ubuntu systems are all NetworkManager. So scrap that, maybe ip route has info?

On Alpine:

~|>> ip route

default via 192.168.88.1 dev wlan0 proto dhcp metric 600

192.168.88.0/24 dev wlan0 proto kernel scope link src 192.168.88.249 metric 600

On Ubuntu:

default via 192.168.88.1 dev wlp1s0 proto dhcp metric 600

169.254.0.0/16 dev wlp1s0 scope link metric 1000

192.168.88.0/24 dev wlp1s0 proto kernel scope link src 192.168.88.222 metric 600

Err no, not that either, seems to be more or less the same between hosts. At which point I started to poke the router.
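Eyeballing two route tables works, but the comparison can also be done mechanically. Here's a minimal Python sketch that boils each `ip route` output down to (destination, gateway) pairs and diffs them. The helper name `normalize_routes` is made up for illustration, and the parsing assumes the simple one-route-per-line layout shown above, not every form `ip route` can print.

```python
def normalize_routes(ip_route_output):
    """Reduce `ip route` output to a set of (destination, gateway) pairs.

    Device names, source addresses and metrics are dropped on purpose,
    since those are expected to differ between hosts.
    """
    routes = set()
    for line in ip_route_output.splitlines():
        fields = line.split()
        if not fields:
            continue
        destination = fields[0]
        # Directly connected routes have no "via", so no gateway.
        gateway = fields[fields.index("via") + 1] if "via" in fields else None
        routes.add((destination, gateway))
    return routes


# Outputs copied from the two hosts above.
ALPINE = """\
default via 192.168.88.1 dev wlan0 proto dhcp metric 600
192.168.88.0/24 dev wlan0 proto kernel scope link src 192.168.88.249 metric 600
"""
UBUNTU = """\
default via 192.168.88.1 dev wlp1s0 proto dhcp metric 600
169.254.0.0/16 dev wlp1s0 scope link metric 1000
192.168.88.0/24 dev wlp1s0 proto kernel scope link src 192.168.88.222 metric 600
"""

only_alpine = normalize_routes(ALPINE) - normalize_routes(UBUNTU)
only_ubuntu = normalize_routes(UBUNTU) - normalize_routes(ALPINE)
print("only on Alpine:", sorted(only_alpine, key=str))
print("only on Ubuntu:", sorted(only_ubuntu, key=str))
```

The only difference it reports is the 169.254.0.0/16 link-local route on the Ubuntu side. The default route and the 192.168.88.0/24 route match on both hosts.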

[corravi@ENGR] > ip route print

Flags: X - disabled, A - active, D - dynamic, C - connect, S - static, r - rip, b - bgp, o - ospf, m - mme,

B - blackhole, U - unreachable, P - prohibit

# DST-ADDRESS PREF-SRC GATEWAY DISTANCE

0 ADS 0.0.0.0/0 XXX.XXX.XXX.XXX 1

1 ADC XXX.XXX.XXX.XXX/20 XXX.XXX.XXX.XXX ether1 0

2 ADU 192.168.88.0/24 192.168.88.1 bridge 0
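Reading the flag column against that legend is mechanical enough to script. Here's a rough Python sketch: `ROUTE_FLAGS` is just the legend typed into a dict, `flagged_routes` is a hypothetical helper name, and the parsing assumes the whitespace-separated layout of this particular printout.

```python
# Legend copied from the "Flags:" lines of the route printout.
ROUTE_FLAGS = {
    "X": "disabled", "A": "active", "D": "dynamic", "C": "connect",
    "S": "static", "r": "rip", "b": "bgp", "o": "ospf", "m": "mme",
    "B": "blackhole", "U": "unreachable", "P": "prohibit",
}

# Flags worth a second look: the route is marked as something other
# than a normal forwarding route.
BAD_FLAGS = {"B", "U", "P"}

ROUTE_PRINT = """\
0 ADS 0.0.0.0/0 XXX.XXX.XXX.XXX 1
1 ADC XXX.XXX.XXX.XXX/20 XXX.XXX.XXX.XXX ether1 0
2 ADU 192.168.88.0/24 192.168.88.1 bridge 0
"""


def flagged_routes(printout):
    """Return (destination, [flag names]) for routes carrying a bad flag."""
    results = []
    for line in printout.splitlines():
        fields = line.split()
        if len(fields) < 3:
            continue
        # Column 0 is the row number, column 1 the flags, column 2 the destination.
        flags, destination = fields[1], fields[2]
        if BAD_FLAGS & set(flags):
            results.append((destination, [ROUTE_FLAGS[f] for f in flags]))
    return results


print(flagged_routes(ROUTE_PRINT))
```

Only route 2 comes back, decoded as active, dynamic, unreachable.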

The LAN gateway is unreachable? But I can get out just fine, perfectly fine in fact. Like enough to stream video, or even play Stadia games. The problem is definitely something to do with the local network, it's obvious. But short of nuking the firewall and starting over, how do I figure out what's wrong? Probably at this point the correct course of action is to just accept that the firewall configuration is bad somehow, and that it really doesn't matter what's wrong. Just knowing that it IS wrong is enough to address the problem, and that's probably enough for a lot of people. Normal people would just grab a backup, reset the entire thing and start over from scratch, maybe even follow a tutorial written by someone smarter than me.

But not me, oh no, I have way too many years of sysadmin experience to just idly sit by. I don't want a fix, I'm too far gone at this point. I need to comprehend the abhorrent levels of misconfiguration I've inflicted upon myself. My pride is on the line, plus applying a fix without understanding it is a temporary patch. Anyone who has played this game long enough knows that if you have to fix it once, you will have to fix it again in the future. And even worse, if I don't understand where I broke what, I'll be the idiot breaking things in the future.

Cue the troubleshooting montage!

Off the top of my head I can think of a few great tools for dealing with this kind of an issue: a Zabbix instance to pull in SNMP data from the firewall and AP, and ntop to filter the NetFlow data from the firewall itself. So I grab a spare NUC off the shelf, slap a couple gigs of RAM into it and a little 32GB SSD, and rush off to configure things. For anyone who hasn't set up a Zabbix server before, this guide on the Alpine Wiki is an excellent resource. Ntop on the other hand wasn't as quick a deployment. Apparently the package was abandoned after a while, replaced with ntopng. Some effort from the Alpine maintainer appears to have been made to port ntopng over, but for whatever reason neither package was kept up with. I might circle back around and adopt both packages, but I worry that something as old as ntop is likely just a CVE magnet.

So I dug up the old unmaintained package from the aports repo. Good enough for internal use I guess. Abuilt that sucker and threw it onto the NUC. Short term problems obviously call for short term deprecated software solutions right?

Once I had what I needed, the configuration was a couple of simple steps. To enable SNMP and NetFlow on the firewall and CAP we just need to set the following:

/snmp/community/add name=Enigma addresses=192.168.88.25/32 read-access=yes write-access=no

/snmp/set trap-community=Enigma engine-id=Enigma enabled=yes trap-version=1

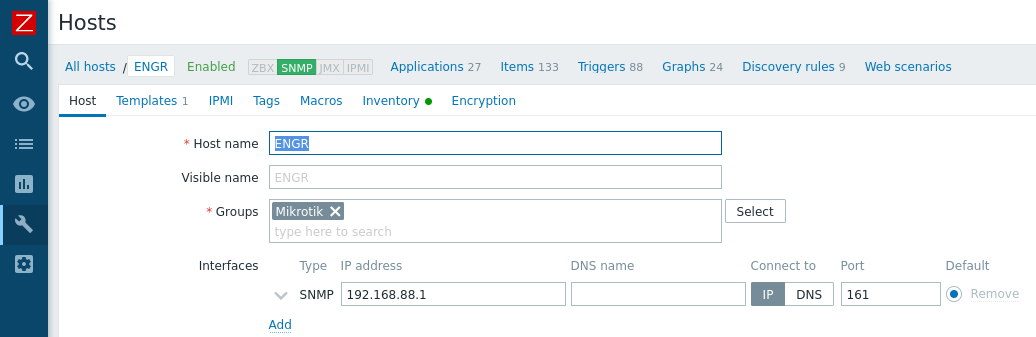

Nothing to it really. Of course if this were a long term installation we'd also enable security settings, and probably use SNMPv3 instead of v1, but I just want data. Adding the MikroTik to Zabbix is just as easy, it only takes setting the host configuration like so:

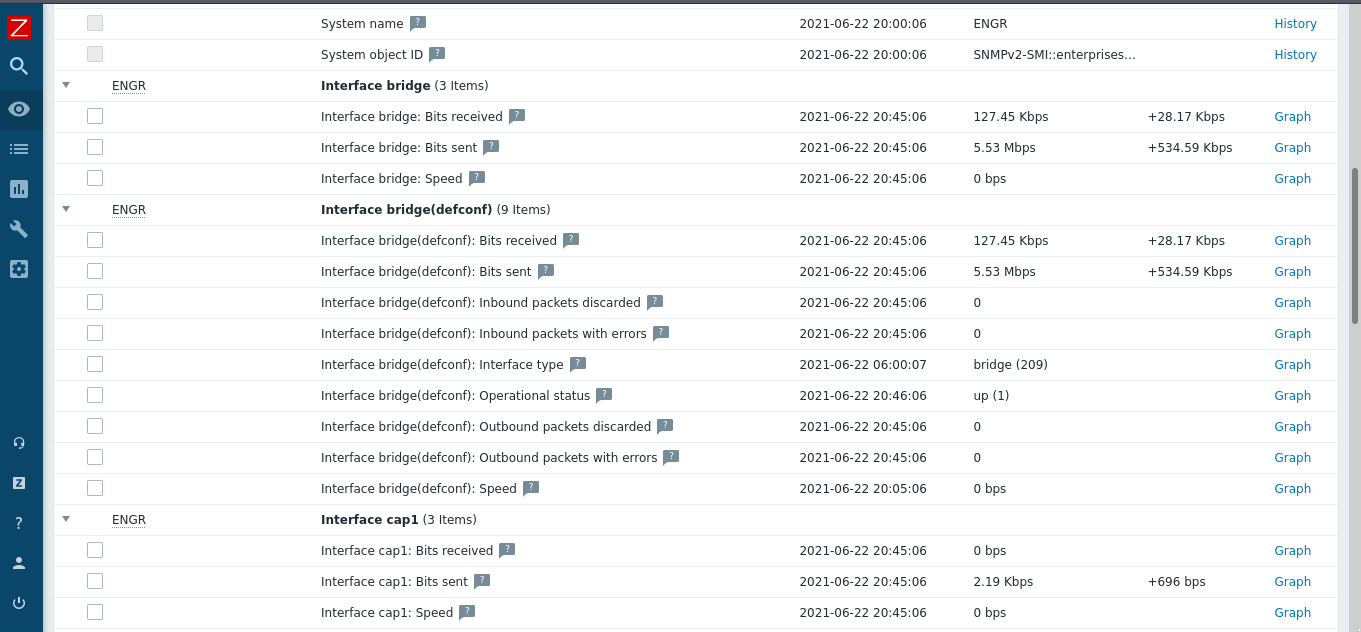

Admittedly Zabbix was far more useful than ntop. Ntop confirmed for me that it was old, and I probably should have spent more time trying to get ntopng packaged correctly, but in its defense it did show me that the network traffic seemed free and clear in transit, at least insofar as the router was concerned. It passed traffic all day, whether it be wireless or wired. Zabbix on the other hand, oh Zabbix was the right amount of pew pew flashy graphs to really drill home the problem.

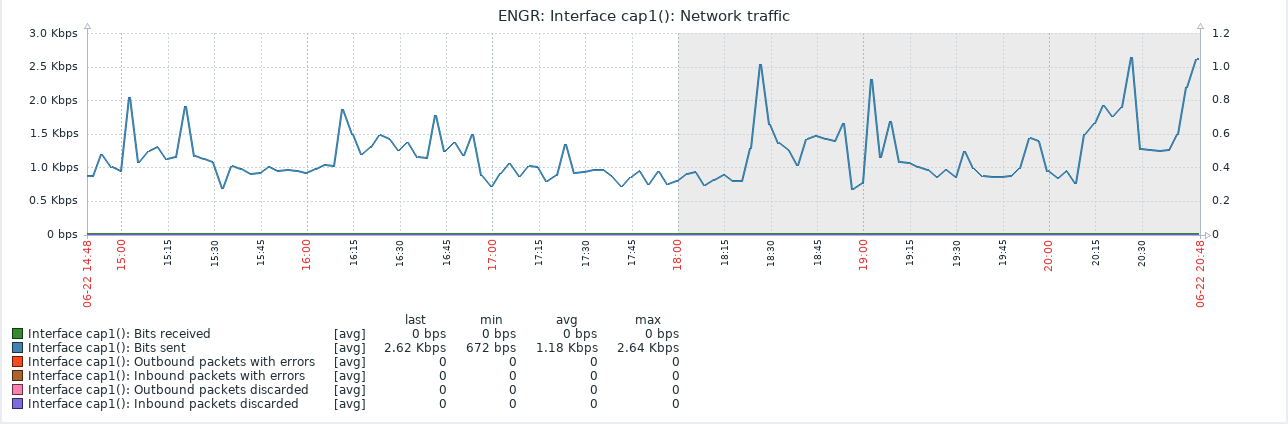

So that little snippet is the traffic flow for one of the cAP ac's wireless interfaces. A quick glance shows that everything seems to just be working: no dropped packets, nothing weird with throughput. This is how everything looked in Zabbix, and on the MikroTik, and even in tcpdump if done from the right place. Of course, it was pretty obvious once I started to actually look at what was going on from the wrong side of things.

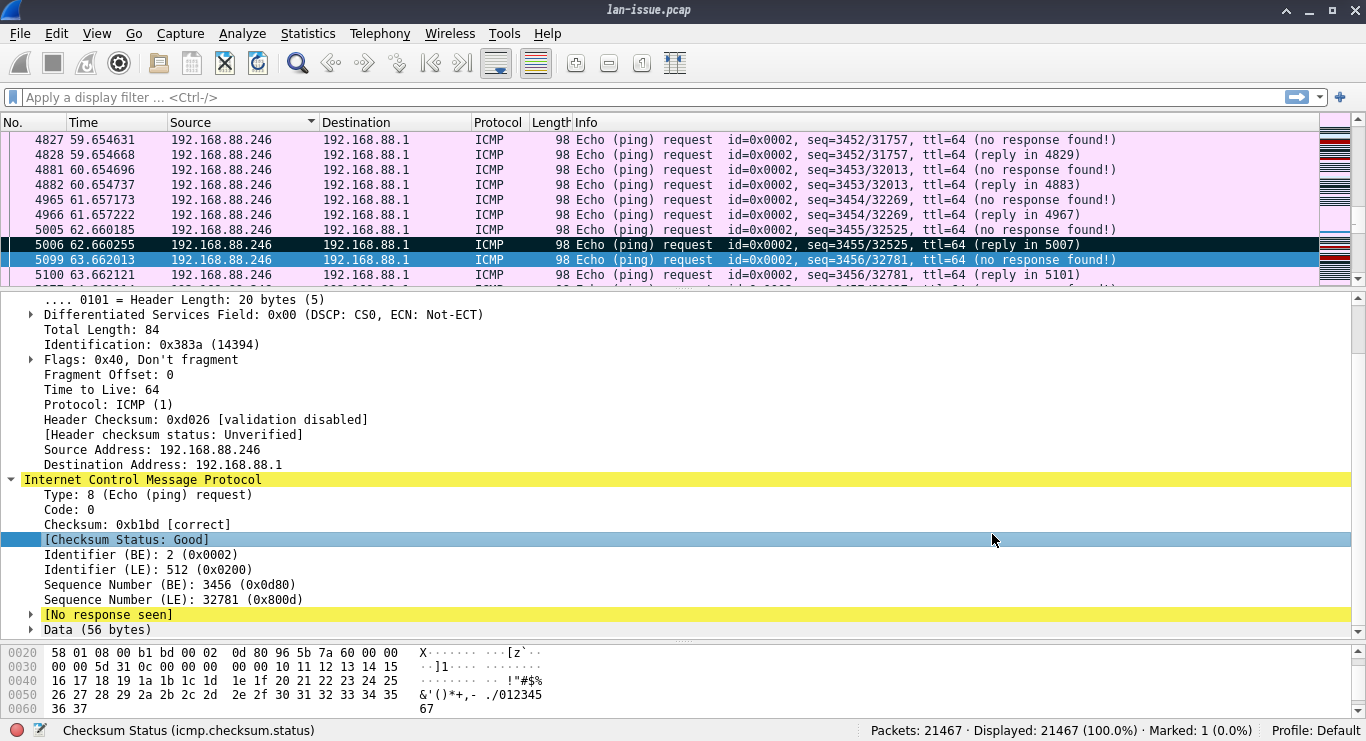

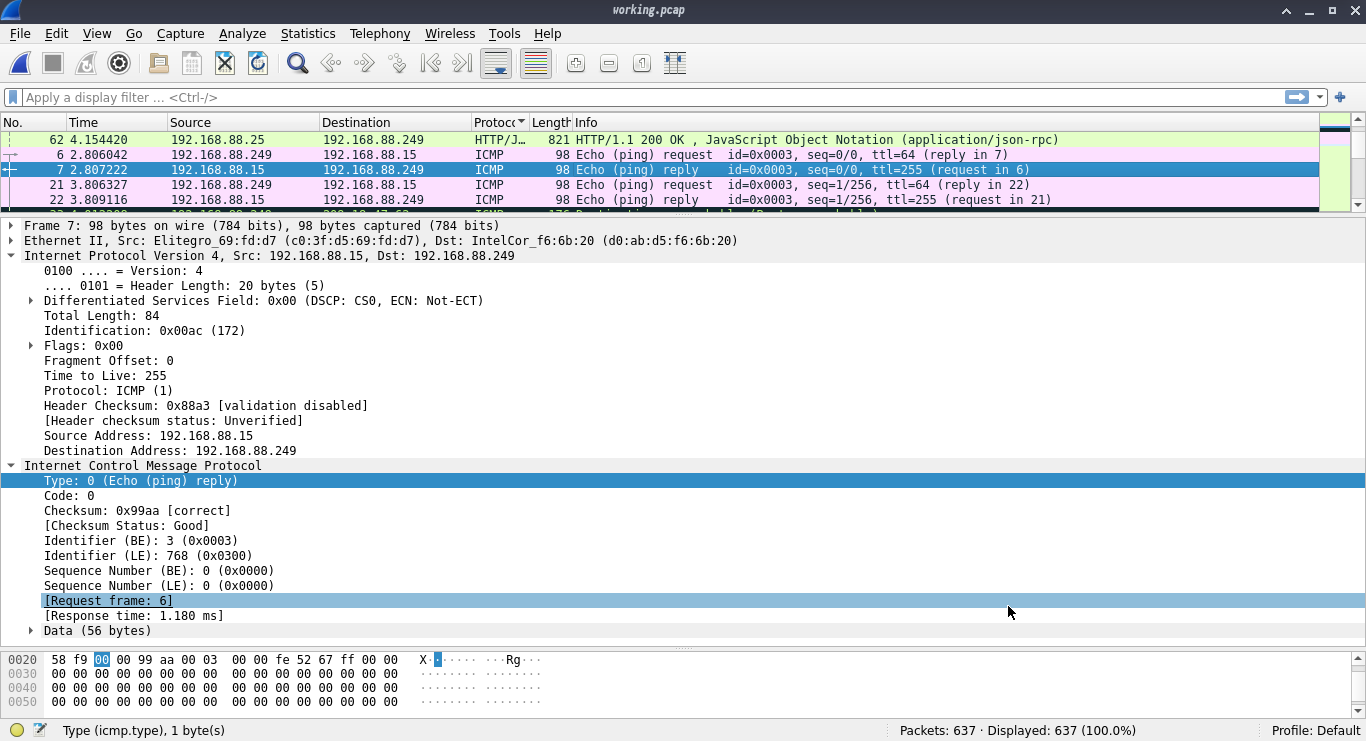

So even a little snippet of ICMP traffic isn't getting anywhere. Well, it's getting somewhere, just not coming back. And healthy ICMP traffic should show an echo reply coming back for every echo request.

So we can reach out, but not back in. And this makes sense. I was able to reach the net, read my blog, and SSH to my servers in DigitalOcean, but on my LAN I couldn't load pages or SSH into anything. Drawterm still worked to get into my Plan 9 server though. Actually I could still ping that too. That's when it dawned on me: the Plan 9 server is hard wired directly into the firewall, and everything else was wireless.
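That wired versus wireless pattern is the kind of thing a dumb little probe script can surface quickly. Below is a minimal Python sketch using a plain TCP connect as the reachability test. The two addresses are the Alpine and Ubuntu hosts from the `ip route` output earlier. The assumption that each runs sshd on port 22, the `HOSTS` table, and the `can_connect` helper are all illustrative, not something from my actual setup.

```python
import socket


def can_connect(host, port, timeout=0.5):
    """Return True if a TCP connection to host:port completes within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Timeouts and unreachable networks land here. So does a refused
        # connection, even though a refusal technically proves the host answered.
        return False


# Hypothetical inventory: label -> (address, how the host is attached).
HOSTS = {
    "alpine-netbook": ("192.168.88.249", "wireless"),
    "ubuntu-box": ("192.168.88.222", "wireless"),
}

if __name__ == "__main__":
    for name, (address, medium) in HOSTS.items():
        state = "reachable" if can_connect(address, 22) else "NOT reachable"
        print(f"{name:15} {medium:9} {address:15} {state}")
```

Run from a wireless client and then again from something wired, a table like this makes it hard to miss when every failure shares the same medium.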

What even broke?

Well, now that I've regaled you with my troubleshooting methods, I can tell you the fix. I sat down, looked at my configuration and read the documentation. Yes that's right, the problem was obvious after reviewing the manual and my own firewall configuration. Here, try it out: take a quick look at the MikroTik CAPsMAN page and look for local-forwarding and client-to-client-forwarding. Just read the description real quick and then take a gander at the configuration that was on my CAPsMAN router.

[corravi@ENGR] /caps-man configuration> print

0 name="Enigma-cfg" mode=ap ssid="Enigma" country=united states

distance=indoors security=enigma-sec

security.authentication-types=wpa2-psk security.encryption=aes-ccm

security.group-encryption=aes-ccm datapath.client-to-client-forwarding=no

datapath.bridge=bridge datapath.local-forwarding=no

I'm sure it's pretty obvious. With local-forwarding=no the CAPs hand all of their wireless traffic to the CAPsMAN router, and with client-to-client-forwarding=no that router won't relay anything between wireless clients, so nothing on my wireless LAN could speak to anything else on it. All the weird firewall rules, packet queues, and strange routing hacks I had tried to patch this with just created a tarpit for me. I over-complicated my own problem by trying to work around it and assuming that "because there's an SSID being broadcast the wireless configuration is perfect". Oof, the amount of frustration that I felt towards myself cannot be described, but part of me is happy too. This is user error plain as day, which can be fixed by being more meticulous.

And honestly it's a great reminder to myself that I'm not perfect, nothing I build is perfect, and I owe it to myself and others to review my own work with the same scrutiny that I would give to someone I didn't trust, because my own willingness to jump to conclusions when troubleshooting was my downfall in this little saga. And it's not as though I'm unaware of these configurations either, I've set up numerous CAPsMAN wireless networks. But I'm forgetful, and I don't always treat my own infrastructure as I should, probably because by the time I get to work on these things I've already exhausted myself mentally keeping things ship shape at work. So yeah, that's it, RTFM. Just because you can whip together fancy monitoring solutions and troubleshoot a problem doesn't mean that you should have to do those things just to have a working home network.
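In the spirit of being more meticulous, this is the sort of check that could be run against a saved copy of that print output. It's a minimal Python sketch, not a real RouterOS parser: `parse_settings` and `FORWARDING_KEYS` are hypothetical names, and it simply splits the printout into key=value tokens and reports which of the two forwarding settings are switched off.

```python
import shlex

# The configuration printout from above, pasted in as-is.
CONFIG_PRINT = '''\
0 name="Enigma-cfg" mode=ap ssid="Enigma" country=united states
distance=indoors security=enigma-sec
security.authentication-types=wpa2-psk security.encryption=aes-ccm
security.group-encryption=aes-ccm datapath.client-to-client-forwarding=no
datapath.bridge=bridge datapath.local-forwarding=no
'''

FORWARDING_KEYS = (
    "datapath.local-forwarding",
    "datapath.client-to-client-forwarding",
)


def parse_settings(print_output):
    """Collect key=value tokens into a dict, stripping surrounding quotes.

    Tokens without "=" (the row number, or the second word of an unquoted
    value such as "united states") are skipped, so multi-word unquoted
    values come out truncated. Good enough for the keys checked here.
    """
    settings = {}
    for token in shlex.split(print_output):
        key, sep, value = token.partition("=")
        if sep:
            settings[key] = value
    return settings


settings = parse_settings(CONFIG_PRINT)
disabled = [key for key in FORWARDING_KEYS if settings.get(key) == "no"]
print(f"{settings['name']}: forwarding settings set to no -> {disabled}")
```

Both keys show up in the list for this config. Whether a `no` is actually wrong depends on the design, so this only points at the settings worth rereading the manual about.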

But hey, on the positive side, I have a swanky monitoring NUC for my LAN now which ends up being a bit of a perk in and of itself.